In Ernest Cline’s dystopian novel Ready Player One, the world’s population is addicted to a virtual reality game called the OASIS. The villain in the book is a large communications company named IOI that will stop at nothing to rule the world—the OASIS virtual world, that is. IOI’s motivation is, simply put, profit, profit, and more profit as it peddles its goods and services in the digital reality. Through subterfuge, spying, rewards, and an assortment of other tactics, IOI gathers intelligence on its users, competitors, and enemies, and then uses that information to its advantage.

But even in a fully connected, always-on digital world such as the OASIS, people have effective tools against IOI’s tracking. They lie. They throw up roadblocks. They create alternate selves. They create private rooms to hold clandestine chats. They go underground. They disconnect.

In a 2013 survey by Pew Research Center, 86 percent of Internet users stated that they had attempted to minimize their digital footprints by taking affirmative steps such as deleting cookies, using a false name or email address, or using a public computer to mask their identities.1 A 2015 survey by TRUSTe/National CyberSecurity Alliance found that 89 percent of consumers refuse to do business with a company that does not protect their privacy.2 Those are just two of dozens of surveys showing similar metrics.3

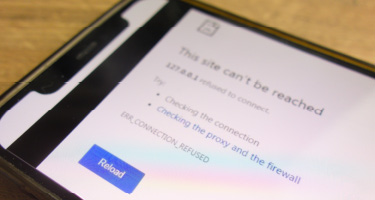

In response to users’ privacy concerns, consumer-friendly privacy protection tools have steadily made their way into the marketplace over the past decade. For example, VPN privacy protection add-ons are now readily available for web browsers, and some browsers, such as Opera, come with a free VPN built in.4 Ad blockers have become so popular that some websites restrict access when a browser blocks their ads.5 And privacy-conscious search engines like DuckDuckGo continue to gain loyal users.6

So what does this have to do with the legal intricacies of data privacy? A lot, actually. As demand increases for privacy tools, more companies are meeting that demand in new and innovative ways. Although the privacy risks inherent in artificial intelligence (AI) are well documented, we are also seeing companies develop AI applications designed to help protect consumer privacy by creating digital noise, or obfuscation, around a person’s online activities. These tools essentially create new layers of false interests and pretend preferences tied to an individual’s online persona, which makes it more difficult for marketers to know which preferences and opinions are true and which are false. Expect to see a variety of AI-powered obfuscation and other related tools and services arriving over the next few years as consumers attempt to distract data collectors from real data.
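To make the idea of digital noise concrete, here is a minimal Python sketch of interest obfuscation. It is an illustration only, not a description of how any particular product works: the function name `obfuscate_interests`, the `DECOY_POOL` list, and the `decoys_per_real` and `seed` parameters are all assumptions made up for this example.

```python
import random

# Hypothetical decoy pool (an assumption for this sketch); a real tool would
# presumably draw from a much larger and constantly changing set.
DECOY_POOL = [
    "sailing", "beekeeping", "opera", "rugby",
    "woodworking", "astronomy", "knitting", "chess",
]


def obfuscate_interests(real_interests, decoy_pool=DECOY_POOL,
                        decoys_per_real=2, seed=None):
    """Return the real interests mixed with random decoys, in shuffled order."""
    rng = random.Random(seed)  # a fixed seed makes the output reproducible
    # Never pick a decoy that is also a genuine interest.
    candidates = [d for d in decoy_pool if d not in real_interests]
    n_decoys = min(len(candidates), decoys_per_real * len(real_interests))
    decoys = rng.sample(candidates, n_decoys)
    profile = list(real_interests) + decoys
    rng.shuffle(profile)  # hide which entries came first
    return profile


if __name__ == "__main__":
    # Two genuine interests end up hidden among four decoys.
    print(obfuscate_interests(["hiking", "jazz"], seed=42))
```

An observer who sees only the returned profile cannot tell from the list itself which entries are genuine. The ratio of decoys to real interests is the main design choice in this sketch: more decoys mean more noise, but also a less plausible persona.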

Whether these new tools and services are legal will be the subject of much debate, especially from companies thwarted in their efforts to collect reliable information about users. Some of these tools will also present novel legal issues related to AI, such as whether an unmonitored chatbot can create a legal contract on behalf of its owner (probably) or whether the owner of an AI tool is always responsible for its activities, even if the AI tool acts contrary to its owner’s instructions (maybe). Then there are the questions of who’s guarding the guards and whether these new privacy tools will eventually be used to collect even more information from consumers.8

We will certainly see new legislation, regulations, and court holdings affecting how companies and third parties may use individuals’ personal information. But technical innovation moves much faster and is more responsive to consumer demand. As consumers seek better protection for their information, expect more privacy tools to emerge that help control the types and amounts of data shared with companies and marketers. And as these tools mature, they will undoubtedly bring new legal questions and challenges.

------------------------

2 https://www.truste.com/resources/privacy-research/ncsa-consumer-privacy-index-us/

3 http://www.pewinternet.org/search/?query=privacy; https://epic.org/privacy/survey/; https://www.law.berkeley.edu/research/bclt/research/privacy-at-bclt/berkeley-consumer-privacy-survey/

4 http://www.opera.com/computer/features/free-vpn

5 https://www.pubnation.com/blog/publishers-fight-back-how-the-top-50-websites-combat-adblock

6 http://www.digitaltrends.com/web/duckduckgo-14-million-searches/

7 https://www.nyu.edu/projects/nissenbaum/papers/Politicalandethicalperspectivesondataobfuscation.pdf

8 In 2016, a popular browser add-on ironically named “Web of Trust” was discovered to be collecting and selling information about its users (see http://www.pcmag.com/news/349328/web-of-trust-browser-extension-cannot-betrusted). In 2017, an inbox management service called Unroll.me was sued for selling user data gleaned from users’ inboxes (see https://www.cnet.com/news/unroll-me-hit-with-privacy-suit-over-alleged-sale-of-user-data/).